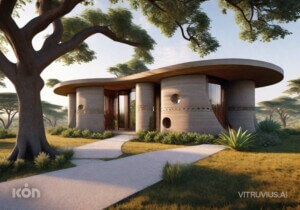

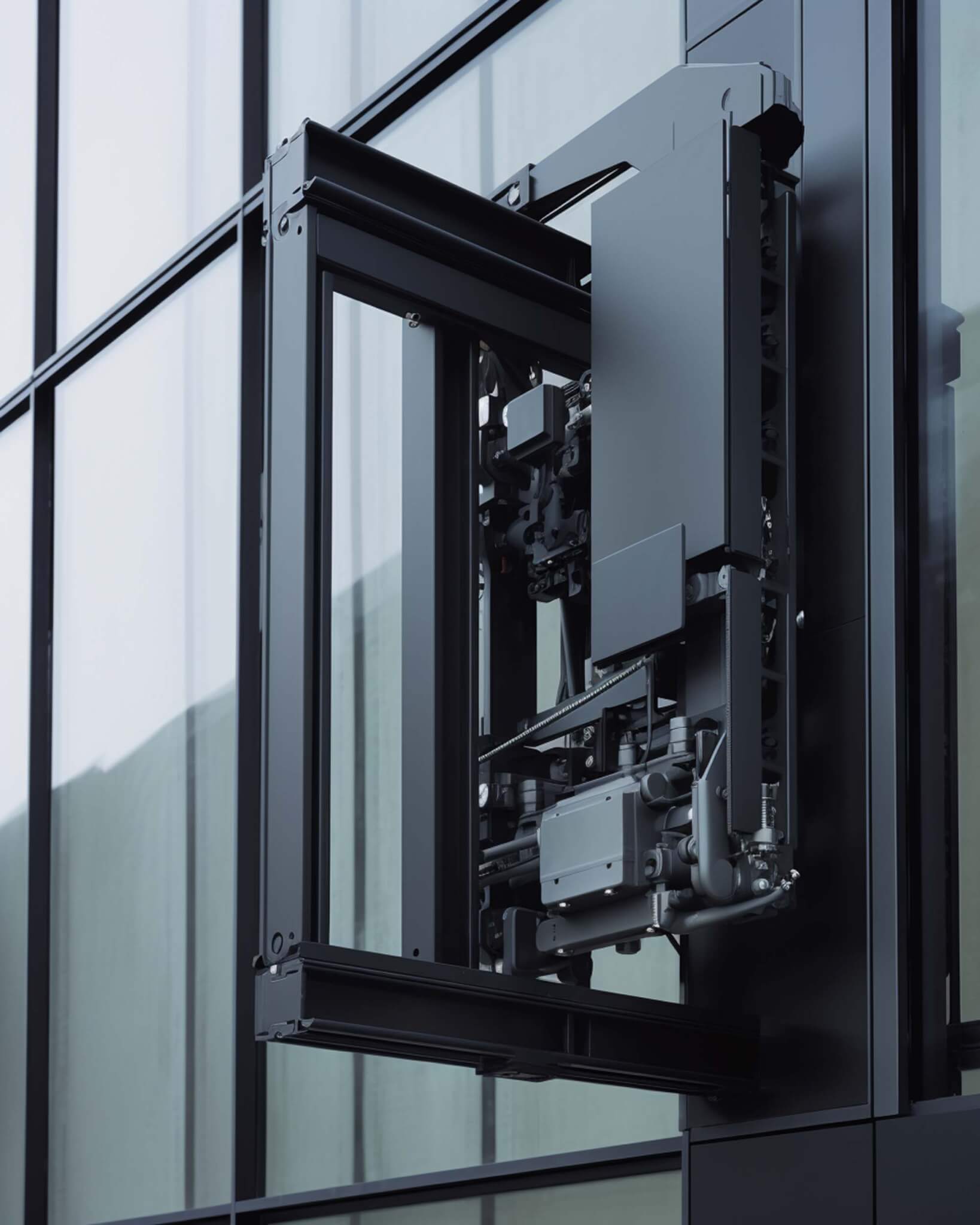

Olivier Campagne operates a digital-image studio in Paris. Educated as an architect, he has spent decades making renderings. Recently, he began creating AI images using Midjourney and posting them on a dedicated Instagram account, @oliver_country. The images advance the architectural imagination he’s been exploring in commissions from European architects like Bruther, Baukunst, and Arrhov Frick. Guided by his eye for mechanisms and appreciation for photography, the scenes are technical, industrial, and minimal, yet still dreamlike. Campagne said that while a handful of images were color-corrected, the vast majority of his posts are straight from Midjourney with no alterations or cropping. AN’s executive editor Jack Murphy spoke with him about his process.

AN: Can you tell me about your background and your professional work with architects?

Olivier Campagne: I graduated and then immediately began making images. I was more excited to do this than to be a practicing architect. I started to make 3D renderings, and I’ve been doing it for about 20 years now.

How do you work together with your clients to get the right mood or shot composition?

I receive sketches, but the bulk of my job is to look around to find and propose views, and then we discuss. I think like a photographer trying to shoot this imaginary building, so I’m looking for framing and composition. With some clients, I don’t receive 3D models, so I work out the details. I try to bring technical details into the image that may not be there yet at that stage of the project.

How did you begin exploring image making using AI?

It’s quite recent for me. There has been a lot of growth, and the quality you can produce with Midjourney is stunning. I remember DALL-E from a year ago, which was funny, because you could immediately see what was wrong with the image. It’s interesting that I’m seeing lots of things like inflatable structures or curtains, which are hard to model yourself. I was doing stuff like this, but afterward I started to focus more on details and mechanisms and how this can mix with architectural elements. It’s hard to manipulate things exactly; it’s more like playing a game. There’s randomness involved, and you don’t get what you expect. And unless you use certain code, you won’t get the same image when you regenerate. It can be frustrating.

But I also like the surprise that arrives from throwing the dice. With photography, there are surprises that happen, like accidents you don’t expect. When you render an image, you go step by step and will not be surprised. So in a way, AI gives me what’s missing in rendering.

Have you mixed AI imagery and rendering yet?

It’s difficult to merge these two interests right now. I’m keeping these experiments separate from my work. It does raise issues about copyright. For example, you can bring any image into the prompt. I try to avoid this, because then you’re making a photograph from another nice photograph. I don’t know by what magic it works, but the starting point can completely disappear into the image and yet, somehow, it’s still there in the color.

I try to concentrate on fully formed images because I think that’s the way I get the most surprise. There’s a lot of training involved. What’s on my Instagram feed is less than 1 percent of the images I make. It’s close to programming, in that you modify the prompt and relaunch. If you like programming and ghostly visuals, it can be quite addicting.

Has this started to influence your professional image making?

Maybe in a more unconscious way, because it pushes you to be more critical about what you’re doing. It sends you back to university, in a way. It helps me understand photography more because you have to have a way that you look at your work. Since I’ve been doing AI, I’ve become stricter. You’re constantly filtering images, and this trains your brain to look for the things you don’t like, so it’s refining your vision. And then when you go back to your own work, you get more severe and then you need to be more precise.

Are you using AI to generate parts of your renderings yet? I know there are tools like this for Photoshop Beta.

The main issue is resolution; it looks good on screen, but when you zoom in, it’s tricky. There are options in Photoshop Beta, and even before the use of AI to make images there was the content-aware fill tool. I’m sure there will be some development to extend AI into making 3D models. But for now, if I were to use it, it would be for small things like putting people in backgrounds, because it is very good at lighting, so this would save some time.

How do you think AI relates to the relationship of architecture and photography? Is there any change to how these two creative disciplines relate to each other?

I had the feeling a couple of months ago that we were living in a revolution that is maybe comparable to when photography was invented. People said, “Painting will disappear,” but that was not the case. My friend reminded me that when color photography was invented, suddenly black-and-white photography became art. Before AI there was all this old, numeric, 3D digital imagery, and that will become more like art.

To come back to the question of inflatable structures and curtains, you can ask: How was the architecture of recent years addicted to things that were possible in the computer? For example, if it’s easier to create soft or inflatable things, will architecture be directed by that? When you render glass, it’s quite easy, but it can make for a fascinating rendering. We’ve seen architecture become more and more influenced by the kinds of images that we can produce. There’s a link there, so maybe AI will introduce a new twist, and then new architectures become possible.

How could AI help create videos?

Video AI needs to be tested more because it’s not yet that good. There’s a lot of work to do in terms of consistency. I’ve tried it, but I’m not yet into it. Already, it takes so much time to be happy with a single image, so making frames for a movie is something else. It takes time. To get to each image I publish, it sometimes takes hours of rendering. It’s not like automatic image processing.

You mentioned you post less than 1 percent of what you create. How do you get to what you call a finished image?

Maybe I have something in mind and then I translate it into text and hit Enter. It gives you an image, and then you say, “OK, that’s what I described but not what I had in mind.” There are frustrating things, like if you type “ceiling,” then Midjourney understands it will be an interior, because if you’re outside you’re likely not to see a ceiling. There are logical issues like this.

I recently tried to make short prompts, like just four words. It tends to increase the surprise. You can generate text using ChatGPT and then use it to create images in Midjourney, but I realize that when you have so many words it’s not going to be a precise image, because it picks random stuff in the prompt. When you have fewer words, and you don’t use any images in the prompt, you get sharp images, so when you see blurry AI images, it might mean that they were generated using an image. I’ve been trying to create super sharp results. Like if you just type “small cabin on the rocks,” then you get a clear image. Maybe I didn’t like the result, but the image is sharp. I’m looking at the image down to the pixel.

While exploring this “artificial landscape” through capturing snapshots and documenting it, I am primarily curating my own production. The selected image would be the one where everything seems to resonate: architecture, framing, composition, and light.